AI Performance Benchmarking: Testing Ball Bounce Dynamics in Rotating Shapes

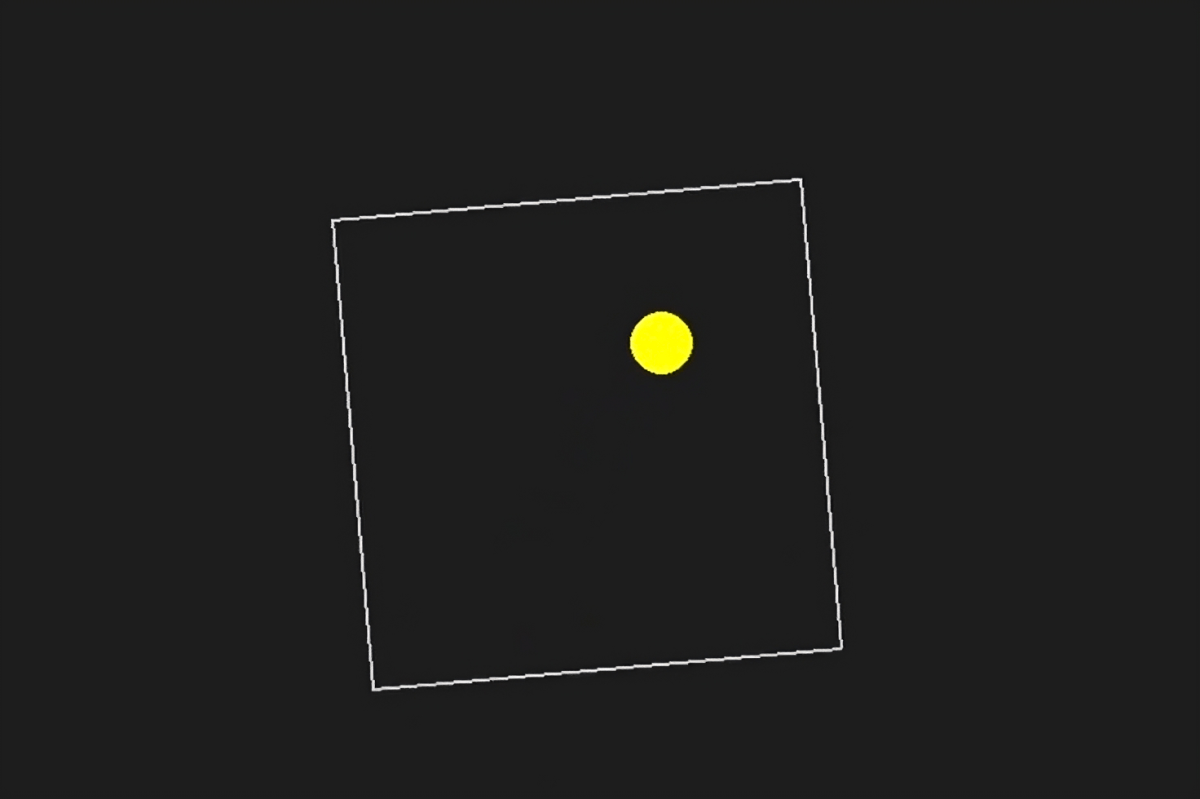

Unconventional AI benchmarks keep gaining popularity. The latest to catch on in the AI community on X tests how well AI models, particularly reasoning models, handle one specific programming task: writing a Python script for a bouncing yellow ball inside a rotating shape.

Comparing AI Models: The Bouncing Ball Challenge

This benchmark has produced widely varying results across models. For instance, one user on X reported that the freely available DeepSeek R1 model outperformed OpenAI’s o1 pro mode, which costs $200 per month as part of OpenAI’s ChatGPT Pro plan.

👀 DeepSeek R1 (right) crushed o1-pro (left) 👀

Prompt: “Write a Python script for a bouncing yellow ball within a square. Ensure collision detection is handled properly, and the square rotates slowly while the ball remains inside.”

Performance Insights

According to X users, other models, such as Anthropic’s Claude 3.5 Sonnet and Google’s Gemini 1.5 Pro, misjudged the physics and let the ball escape the shape. By contrast, Google’s Gemini 2.0 Flash Thinking Experimental and even OpenAI’s older GPT-4o passed the test.

A ranking shared on X placed the models as follows:

- Top Performer: DeepSeek R1

- Second Place: Sonar Huge

- Third Place: GPT-4o

- Lowest Score: OpenAI o1 (completely misunderstood the task)

The Significance of the Bouncing Ball Simulation

But what does this experiment really reveal about AI capabilities? Simulating a bouncing ball is a classic programming challenge. An accurate simulation needs collision detection: code that determines when two objects, such as the ball and the shape’s boundary, touch or overlap.
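Collision detection between the ball and one wall of the shape can be sketched in a few lines of Python. This is a minimal illustration, not code from any model’s output, assuming the wall is a line segment given by its two endpoints, the ball is a circle, and positions and velocities are plain `(x, y)` tuples; the function names and the `restitution` parameter (the fraction of normal speed kept after a bounce) are hypothetical. The idea is to find the point on the wall closest to the ball’s center: the two collide when that distance is smaller than the ball’s radius.

```python
import math

# Hypothetical helpers for illustration; not taken from any model's output.


def closest_point_on_segment(p, a, b):
    """Return the point on segment a-b nearest to point p (all (x, y) tuples)."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:  # degenerate segment: both endpoints coincide
        return a
    # Project p onto the infinite line through a and b, then clamp the
    # projection parameter so the result stays on the segment.
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / length_sq
    t = max(0.0, min(1.0, t))
    return (a[0] + t * dx, a[1] + t * dy)


def circle_hits_segment(center, radius, a, b):
    """Return (hit, normal, depth) for a ball of the given radius against a-b.

    normal is a unit vector pointing from the wall toward the ball's center;
    depth is how far the ball overlaps the wall.
    """
    qx, qy = closest_point_on_segment(center, a, b)
    nx, ny = center[0] - qx, center[1] - qy
    dist = math.hypot(nx, ny)
    if dist >= radius:
        return False, (0.0, 0.0), 0.0
    if dist == 0.0:
        # Center lies exactly on the wall: fall back to the segment's
        # perpendicular (assumes a non-degenerate segment).
        dx, dy = b[0] - a[0], b[1] - a[1]
        length = math.hypot(dx, dy)
        return True, (-dy / length, dx / length), radius
    return True, (nx / dist, ny / dist), radius - dist


def bounce(center, velocity, radius, a, b, restitution=0.9):
    """Push the ball out of wall a-b and reflect its velocity on contact.

    restitution is the fraction of the normal speed kept after the bounce
    (1.0 = perfectly elastic).
    """
    hit, (nx, ny), depth = circle_hits_segment(center, radius, a, b)
    if not hit:
        return center, velocity
    # Separate the two bodies along the contact normal.
    new_center = (center[0] + nx * depth, center[1] + ny * depth)
    vx, vy = velocity
    vn = vx * nx + vy * ny  # speed along the normal; negative = into the wall
    if vn < 0.0:
        vx -= (1.0 + restitution) * vn * nx
        vy -= (1.0 + restitution) * vn * ny
    return new_center, (vx, vy)


# A ball of radius 1 dips into a floor running from (0, 0) to (10, 0).
pos, vel = bounce((5.0, 0.5), (1.0, -3.0), 1.0, (0.0, 0.0), (10.0, 0.0), restitution=1.0)
print(pos, vel)  # (5.0, 1.0) (1.0, 3.0)
```

Clamping the projection parameter matters: without it, a ball near the end of a wall would be tested against the wall’s infinite extension rather than the wall itself, and corners of the shape would register phantom hits.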

N8 Programs, an X user and researcher at AI startup Nous Research, said that writing a bouncing ball in a rotating heptagon took him approximately two hours. He pointed to the work involved in tracking multiple coordinate systems and making collision detection robust within each of them.
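The coordinate-system bookkeeping can be sketched in Python as a headless simulation. This is a minimal illustration under stated assumptions, not N8 Programs’ code or any model’s output: a regular polygon (a heptagon here) spins about the origin at a constant angular speed, the ball feels only gravity, and every constant and function name (`step`, `collide_in_body_frame`, `OMEGA`, and so on) is hypothetical. Each step moves the ball in the world frame, converts its position and velocity into the shape’s own rotating frame (where the walls stand still), resolves collisions there, and converts back.

```python
import math

# Hypothetical illustration: a ball inside a regular polygon spinning about
# the origin. Not taken from any model's output; all constants are assumptions.
SIDES = 7          # heptagon
APOTHEM = 5.0      # distance from the center to the middle of each edge
OMEGA = 0.5        # angular speed of the shape in rad/s (counterclockwise)
RADIUS = 0.3       # ball radius
GRAVITY = -9.81    # acceleration along world y
RESTITUTION = 0.9  # fraction of normal speed kept after a bounce


def rotate(v, angle):
    """Rotate the 2D vector v counterclockwise by angle radians."""
    c, s = math.cos(angle), math.sin(angle)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1])


def spin_velocity(p):
    """Velocity of a point p rigidly attached to the spinning shape (omega x p)."""
    return (-OMEGA * p[1], OMEGA * p[0])


def collide_in_body_frame(p, v):
    """Keep the ball inside the polygon, which is static in its own frame."""
    px, py = p
    vx, vy = v
    for k in range(SIDES):
        # Outward unit normal of edge k, fixed in the body frame.
        a = 2.0 * math.pi * k / SIDES
        nx, ny = math.cos(a), math.sin(a)
        # The ball is inside this edge while its center stays at least
        # RADIUS away from the edge line.
        overshoot = px * nx + py * ny - (APOTHEM - RADIUS)
        if overshoot > 0.0:
            px -= overshoot * nx
            py -= overshoot * ny
            vn = vx * nx + vy * ny
            if vn > 0.0:  # moving outward through the wall: reflect
                vx -= (1.0 + RESTITUTION) * vn * nx
                vy -= (1.0 + RESTITUTION) * vn * ny
    return (px, py), (vx, vy)


def step(p, v, t, dt):
    """Advance the world-frame ball state by dt; the shape's angle is OMEGA * t."""
    # 1. Apply gravity and move in the world frame (semi-implicit Euler).
    v = (v[0], v[1] + GRAVITY * dt)
    p = (p[0] + v[0] * dt, p[1] + v[1] * dt)
    theta = OMEGA * (t + dt)
    # 2. World -> body frame: undo the rotation and subtract the frame's own
    #    motion so the walls appear stationary.
    wall_v = spin_velocity(p)
    p_body = rotate(p, -theta)
    v_body = rotate((v[0] - wall_v[0], v[1] - wall_v[1]), -theta)
    # 3. Resolve collisions against the now-static polygon.
    p_body, v_body = collide_in_body_frame(p_body, v_body)
    # 4. Body -> world frame: rotate back and add the wall velocity again.
    p = rotate(p_body, theta)
    wall_v = spin_velocity(p)
    v_rel = rotate(v_body, theta)
    return p, (v_rel[0] + wall_v[0], v_rel[1] + wall_v[1])


if __name__ == "__main__":
    p, v, t, dt = (0.0, 0.0), (2.0, 0.0), 0.0, 1.0 / 120.0
    for _ in range(1200):  # ten simulated seconds
        p, v = step(p, v, t, dt)
        t += dt
    print(f"after {t:.1f} s: position=({p[0]:.2f}, {p[1]:.2f})")
```

Working in the body frame turns a moving-wall problem into a static-wall one, at the cost of subtracting the wall’s own velocity on the way in and adding it back on the way out. Because the shape is convex, the ball stays inside exactly when its center is at least one radius from every edge line, so each edge reduces to a single half-plane check; a non-convex shape would need per-segment tests instead.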

The Limitations of AI Benchmarks

The bouncing ball test says something about programming skill, but it falls short as a comprehensive AI benchmark. Small changes to the prompt can produce very different results, which may explain why some users report better luck with different models.

Viral tests like this one highlight how hard it remains to measure AI models meaningfully. It’s often unclear what sets one model apart from another, especially when comparisons rest on niche benchmarks that may lack broader relevance.

Future of AI Benchmarking

Efforts are currently underway to develop more effective tests, such as the ARC-AGI benchmark and Humanity’s Last Exam. As the field progresses, we can expect to see more refined evaluations of AI performance, while we continue to enjoy entertaining GIFs of bouncing balls in rotating shapes.