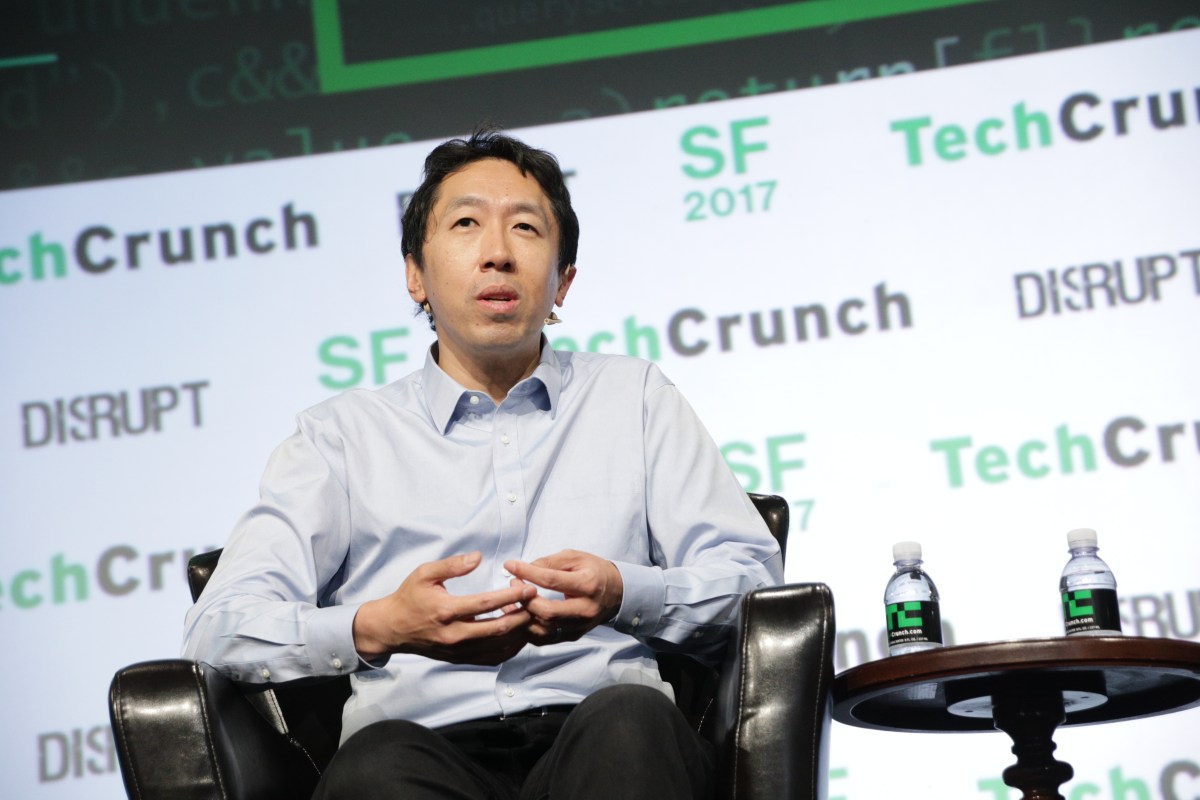

Andrew Ng Celebrates Google’s Decision to Abandon AI Weapons Pledge

Andrew Ng, a prominent figure in the AI community and former leader of Google Brain, has publicly expressed his support for Google’s recent decision to retract its commitment against developing AI systems for military applications. This pivotal change has sparked discussions about the role of AI in national security and warfare.

Google’s Shift on AI Weapons Development

In a significant move, Google has removed a seven-year-old pledge from its AI principles page that vowed to refrain from designing AI for weapons or surveillance. This announcement was accompanied by a blog post from DeepMind CEO Demis Hassabis, advocating for collaboration between corporations and governments to create AI that enhances national security.

Background on Google’s AI Weapons Pledge

Google established the original pledge in 2018 after a backlash over its involvement in Project Maven, a U.S. military initiative that used AI to analyze drone footage. Thousands of Google employees protested the company’s participation, many out of concern about the ethical implications of using AI in military operations.

Ng’s Perspective on Military Support

During a recent interview at the Military Veteran Startup Conference in San Francisco, Ng expressed his confusion over the protests against Project Maven. He emphasized the importance of supporting American service members, stating, “How the heck can an American company refuse to help our own service people that are out there, fighting for us?”

AI Regulation and Competition with China

Ng also said he was relieved that two major AI regulatory efforts had ended: California’s SB 1047 bill and President Biden’s AI executive order, both of which he believed would have hindered open-source AI development in the U.S. He argued that the key to AI safety in America is maintaining a technological edge over China.

AI’s Impact on Modern Warfare

Ng noted that AI-driven drones have the potential to transform contemporary battlefields, highlighting the urgency of integrating AI into military strategies. His views align with those of other former Google executives, such as Eric Schmidt, who is actively lobbying for the U.S. to invest in AI drones to maintain military superiority.

Internal Divisions at Google Over AI and Military Use

Despite Ng’s and Schmidt’s support for military AI applications, current and former Google employees remain sharply divided on the issue. Meredith Whittaker, now president of Signal and a key figure in the Project Maven protests, has opposed Google’s involvement in warfare, asserting that the company “should not be in the business of war.”

- Geoffrey Hinton, a Nobel laureate and former Google AI researcher, has advocated for global prohibitions on AI in weapons.

- Jeff Dean, chief scientist of DeepMind, previously signed a letter opposing machine learning in autonomous weapons.

In recent years, both Google and Amazon have faced criticism for their military contracts, particularly Project Nimbus, a deal with the Israeli government that drew employee protests over the provision of cloud services to military forces.

The Future of AI in Military Applications

As the Pentagon and other military organizations show growing interest in AI, major tech companies are investing heavily in AI infrastructure and exploring military partnerships. This shift raises important questions about ethics, safety, and the responsibilities of technology companies.
