Breakthrough in AI Scaling: New Method Unveiled, but Experts Urge Caution

Recent discussions on social media have sparked curiosity about a potential new AI “scaling law.” However, experts in the field approach these claims with skepticism. AI scaling laws describe the relationship between the size of datasets, computing resources, and the resulting performance of AI models. This article explores the evolution of these laws and the implications of the newly proposed “inference-time search.”

Understanding AI Scaling Laws

Traditionally, the concept of AI scaling laws has centered around pre-training, where larger models are trained on increasingly extensive datasets. This practice has dominated the landscape of AI development, especially among leading research labs. However, recent advancements have introduced two supplementary scaling laws:

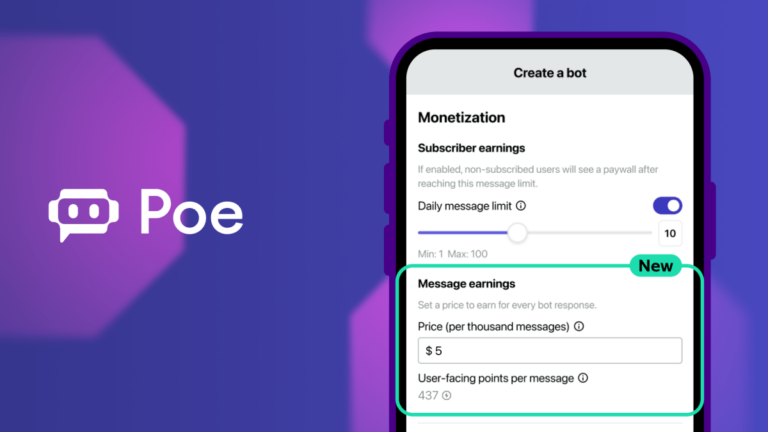

- Post-training scaling: This involves fine-tuning an AI model’s behavior after its initial training.

- Test-time scaling: This refers to allocating more computing power during inference, that is, while the model is answering a query, to support a form of step-by-step reasoning.

Introducing Inference-Time Search

In a recent paper, researchers from Google and UC Berkeley proposed what some are calling a fourth scaling law: inference-time search. With this approach, a model generates many candidate answers to a query in parallel and then selects the best one. According to the researchers, the method can lift the performance of existing models, such as Google’s Gemini 1.5 Pro, above that of OpenAI’s o1-preview on certain science and mathematics benchmarks.
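The sample-then-select loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: `inference_time_search`, `toy_generate`, and `toy_verify` are hypothetical names, the "model" is a random guesser, and the verifier is a simple programmatic check standing in for whatever scoring a real system would use.

```python
import random
from typing import Callable, List, Tuple


def inference_time_search(
    prompt: str,
    generate: Callable[[str, random.Random], str],
    verify: Callable[[str, str], float],
    num_samples: int = 200,
    seed: int = 0,
) -> Tuple[str, float]:
    """Sample several candidate answers, score each one, return the best."""
    if num_samples < 1:
        raise ValueError("num_samples must be at least 1")
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    # Step 1: draw independent candidate answers for the same prompt.
    candidates: List[str] = [generate(prompt, rng) for _ in range(num_samples)]
    # Step 2: score every candidate with the verifier.
    scored = [(verify(prompt, candidate), candidate) for candidate in candidates]
    # Step 3: keep the highest-scoring candidate (first one wins on ties).
    best_score, best_answer = max(scored, key=lambda pair: pair[0])
    return best_answer, best_score


# Hypothetical stand-in for a model: a noisy guess at 17 + 25.
def toy_generate(prompt: str, rng: random.Random) -> str:
    return str(42 + rng.choice([-2, -1, 0, 1, 2]))


# Hypothetical stand-in for a verifier: 1.0 if the arithmetic checks out.
def toy_verify(prompt: str, candidate: str) -> float:
    return 1.0 if int(candidate) == 17 + 25 else 0.0


if __name__ == "__main__":
    answer, score = inference_time_search(
        "What is 17 + 25?", toy_generate, toy_verify
    )
    print(answer, score)
```

In this toy setup the verifier is trivial to write because the task has a checkable answer. For open-ended tasks, producing a reliable score is the hard part, and the sketch says nothing about how to do that.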

Key Insights from the Research

Eric Zhao, a doctoral fellow at Google and co-author of the paper, highlighted the findings on social media. He noted:

“By just randomly sampling 200 responses and self-verifying, Gemini 1.5 — an early 2024 model — beats o1-preview and approaches o1. The magic is that self-verification naturally becomes easier at scale!”

This suggests that a larger pool of candidate solutions can make the correct answer easier to identify, not harder, which runs counter to the intuition that picking out one good response gets more difficult as the pool grows.
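A back-of-the-envelope calculation helps show one part of the picture. Under the simplifying assumption (ours, not the paper's) that each sampled response is independently correct with probability $p$, the chance that a pool of $N$ responses contains at least one correct answer is:

$$
P(\text{at least one correct}) = 1 - (1 - p)^N
$$

With an illustrative $p = 0.05$, a single sample is right 5% of the time, ten samples give $1 - 0.95^{10} \approx 0.40$, and 200 samples give $1 - 0.95^{200} \approx 0.99996$. This only shows that a large pool almost certainly contains a correct answer. It does not explain why picking that answer out would become easier at scale, which is the separate claim the researchers make.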

Expert Opinions on Inference-Time Search

Despite the promising results, several experts remain cautious about the practical applications of inference-time search. Matthew Guzdial, an AI researcher at the University of Alberta, expressed concerns about the method’s effectiveness:

“If we can’t write code to define what we want, we can’t use inference-time search. For general language interaction, this approach is not particularly effective.”

Mike Cook, a research fellow at King’s College London, echoed these sentiments, stating:

“Inference-time search doesn’t elevate the reasoning process of the model; it merely circumvents the limitations of a technology that can make confidently erroneous mistakes.”

The Future of AI Scaling Techniques

The skepticism surrounding inference-time search highlights the challenges the AI industry faces in scaling reasoning capabilities efficiently. As the paper’s co-authors note, today’s reasoning models can incur substantial costs, often thousands of dollars, just to solve a single mathematical problem.

As researchers continue to explore innovative scaling techniques, the quest for more effective approaches in AI development is likely to persist.