How OpenAI’s Bot Overwhelmed a Seven-Person Company’s Website Like a DDoS Attack

On Saturday, Triplegangers CEO Oleksandr Tomchuk faced a significant challenge when his company’s e-commerce website went offline. This incident was caused by a distributed denial-of-service (DDoS) attack, which turned out to be the result of a bot from OpenAI relentlessly trying to scrape data from his extensive online catalog.

Incident Overview: DDoS Attack by OpenAI Bot

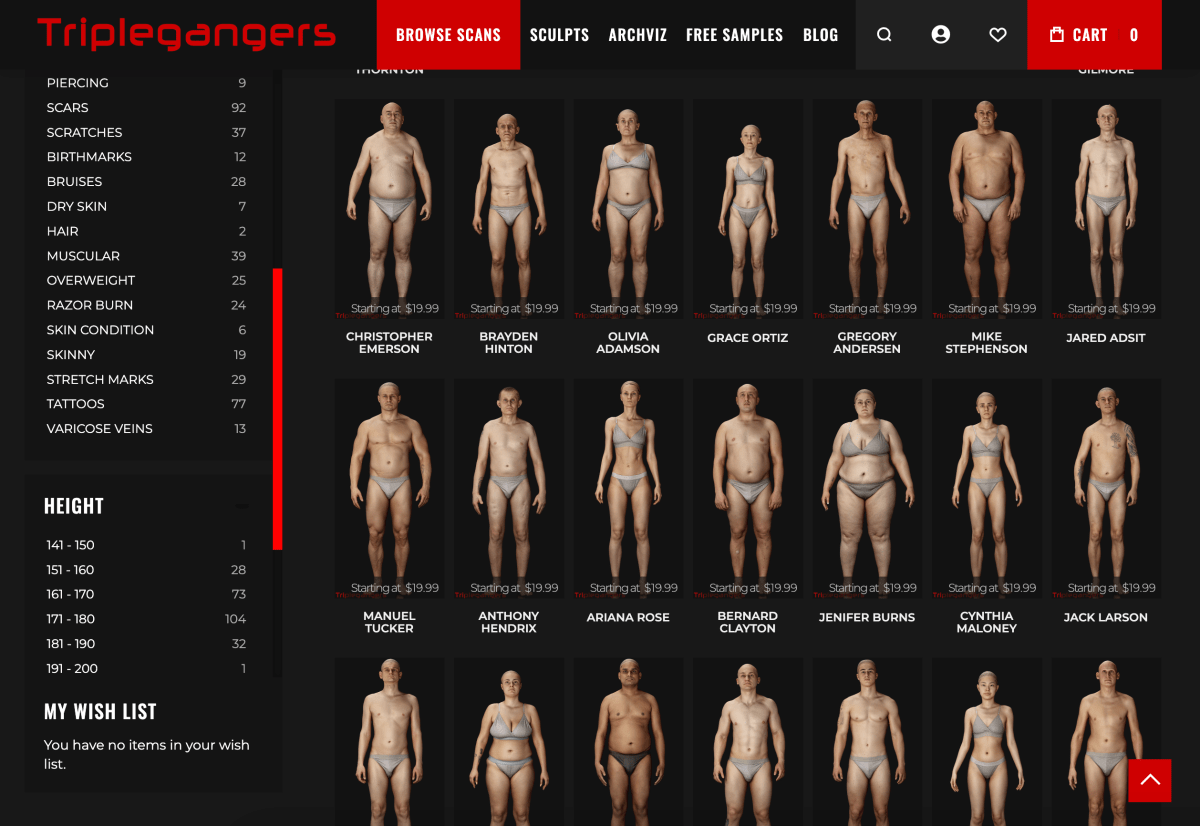

Tomchuk quickly realized that the source of the disruption was an OpenAI bot that was attempting to download a vast amount of information from Triplegangers’ site. He explained, “We have over 65,000 products, each with its own page, and every page contains at least three images.” The bot was sending “tens of thousands” of requests to the server, resulting in hundreds of thousands of photo downloads along with detailed product descriptions.

- OpenAI utilized approximately 600 IP addresses to conduct the scraping.

- Tomchuk and his team are still analyzing server logs to determine the full extent of the attack.

The Impact on Triplegangers

Triplegangers, a small company with only seven employees, has dedicated over a decade to building what it claims is the largest database of “human digital doubles” online. This database includes 3D scanned images of real human models, which are sold to various industries, including video game development and digital art.

Despite having a terms of service page that prohibits unauthorized bot activity, the site’s protection was inadequate. Tomchuk noted that sites need to configure their robots.txt file correctly to prevent bots like OpenAI’s GPTBot from accessing their content. This file, known as the Robots Exclusion Protocol, is designed to inform search engines which parts of a website should not be crawled.

Understanding Robots.txt and Its Limitations

OpenAI states that it respects robots.txt configurations; however, there are limitations. For instance, it can take up to 24 hours for OpenAI’s bots to recognize updates to these files. This delay can leave websites vulnerable in the meantime.

Tomchuk expressed frustration, stating, “If a site isn’t using robots.txt properly, companies like OpenAI assume they can scrape data freely.” Unfortunately, the damage was already done, and Triplegangers faced unexpected server costs due to the excessive activity generated by the bot.

Mitigating Future Risks

By mid-week, after enduring days of bot attacks, Triplegangers implemented a correctly configured robots.txt file and established a Cloudflare account to block the GPTBot and other unwanted crawlers. Tomchuk reported that their site remained stable following these changes.

However, the challenge remains: Tomchuk has no clear way to identify what data was successfully scraped or to retrieve that material. He noted, “I found no way to contact OpenAI for assistance.” Furthermore, the long-promised opt-out tool from OpenAI has yet to be delivered.

Legal Implications and Industry Concerns

The situation is particularly precarious for Triplegangers due to the nature of their work, which involves scanning actual individuals. Laws such as the General Data Protection Regulation (GDPR) emphasize that companies cannot use images of individuals without consent.

Triplegangers’ site is a goldmine for AI crawlers because it includes detailed tagging of images by ethnicity, age, and other characteristics—valuable data for AI training.

A Call for Action: Protecting Online Businesses

Tomchuk wants other small online businesses to be aware of the risks posed by AI bots. “The only way to know if an AI bot is stealing your copyrighted material is to actively monitor your server logs,” he cautioned. The issue is widespread, with many businesses reporting similar experiences with bots crashing their sites.

According to recent research from DoubleVerify, there has been an 86% increase in “general invalid traffic” attributed to AI crawlers in 2024, highlighting the urgency of addressing this issue.

In conclusion, Tomchuk likened the behavior of AI bots to a “mafia shakedown,” asserting that companies should seek permission rather than scrape data without consent. As the digital landscape evolves, it is imperative for online businesses to implement robust measures to safeguard their content.

For more insights into AI and its impact on the digital economy, subscribe to TechCrunch’s AI-focused newsletter delivered every Wednesday.