Meta’s Strategic Revenue-Sharing Agreements with Llama AI Model Hosts Unveiled in New Filing

Meta CEO Mark Zuckerberg has said that “selling access” to Meta’s Llama AI models isn’t part of the company’s business strategy. A recently uncovered court filing, however, shows that Meta does have revenue-sharing agreements with companies that host those models.

Meta’s Revenue Model for Llama AI

The filing, submitted in the ongoing copyright case Kadrey v. Meta, states that Meta “shares a percentage of the revenue” generated by companies that host its Llama models. So while Meta may not sell direct access to Llama, it still profits from its hosting partnerships.

Hosting Partners of Llama Models

The filing does not say which hosts pay Meta a share of their Llama revenue. Meta has, however, publicly named a number of Llama hosting partners, including:

- AWS

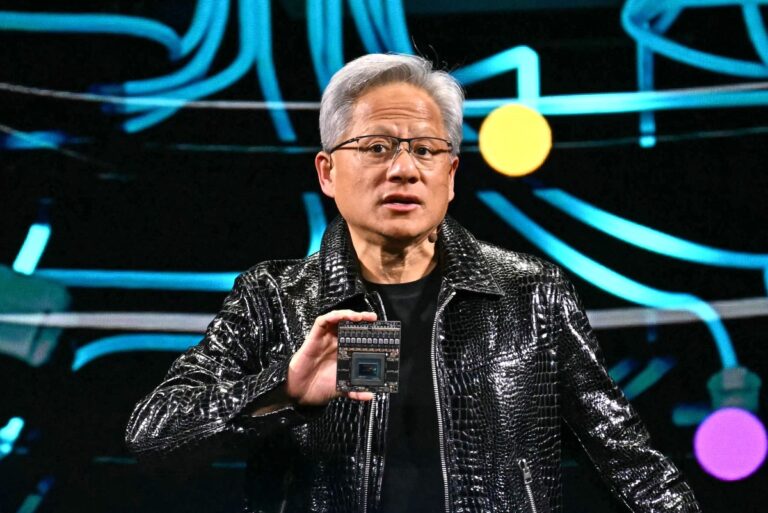

- Nvidia

- Databricks

- Groq

- Dell

- Azure

- Google Cloud

- Snowflake

Developers don’t have to go through a partner to use Llama: the models can be downloaded and run on their own hardware. Many developers nonetheless choose a hosting partner, because these hosts offer additional services and tooling that make Llama models easier to deploy and operate.

Future of Llama AI and Monetization Strategies

During Meta’s earnings call in April, Zuckerberg hinted at potential ways to monetize Llama, including licensing access and showing ads within AI interactions. He stated, “If you’re someone like Microsoft or Amazon or Google and you’re going to basically be reselling these services, that’s something that we think we should get some portion of the revenue for.”

Value Derived from AI Community Involvement

Zuckerberg has emphasized that much of the value Meta gets from Llama comes from improvements contributed by the AI research community. Meta also uses Llama models to power a range of its own products, including the Meta AI assistant.

“I think it’s good business for us to do this in an open way,” Zuckerberg remarked during Meta’s Q3 2024 earnings call. “It makes our products better rather than if we were just on an island building a model that no one was kind of standardizing around in the industry.”

Legal Challenges and Allegations Against Meta

The plaintiffs in Kadrey v. Meta allege that Meta not only trained its Llama models on pirated e-books but also facilitated infringement by uploading those works. According to the plaintiffs, Meta used covert torrenting methods to obtain e-books for training and, in the process, shared them with other users.

Investment in AI and Future Developments

Looking ahead, Meta plans to significantly increase its capital expenditures, with spending in 2025 forecast at between $60 billion and $80 billion. The budget is driven primarily by data center investments and larger AI development teams.

To offset some of these costs, Meta is reportedly considering a subscription service for Meta AI that would add new capabilities to the assistant.

Updated on 3/21 at 1:54 p.m.: A Meta spokesperson directed TechCrunch to the earnings call transcript for further context, including Zuckerberg’s comments about revenue-sharing with major Llama model hosts.