Revealing the Truth: New Research Shows AI Struggles with Historical Accuracy

Recent research finds that while large language models (LLMs) demonstrate remarkable capabilities on many tasks, they falter on advanced historical questions. The study introduces a new benchmark, Hist-LLM, designed to evaluate how accurately leading LLMs such as OpenAI’s GPT-4, Meta’s Llama, and Google’s Gemini answer historical questions.

Understanding the Hist-LLM Benchmark

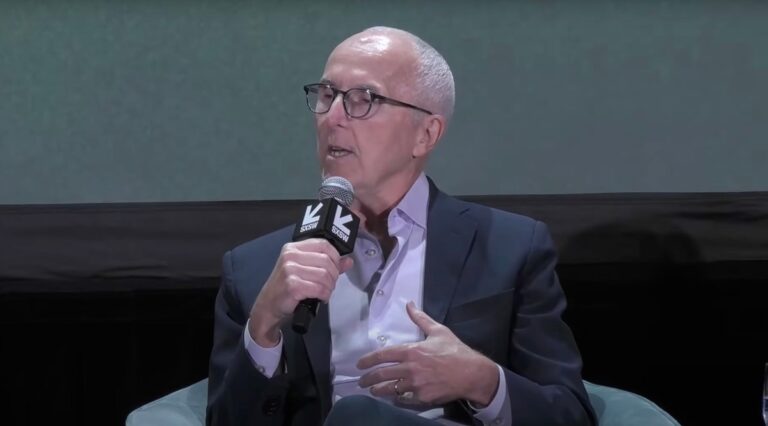

The Hist-LLM benchmark assesses the correctness of answers provided by LLMs against the Seshat Global History Databank, a comprehensive repository of historical data named after the ancient Egyptian goddess of wisdom. This evaluation was presented at the prestigious NeurIPS conference last month.

Disappointing Results from Leading LLMs

According to researchers from the Complexity Science Hub (CSH) in Austria, the findings were underwhelming. The most successful model, GPT-4 Turbo, achieved only around 46% accuracy, which is not much better than random guessing.

Maria del Rio-Chanona, co-author of the study and associate professor at University College London, stated, “The main takeaway from this study is that LLMs, while impressive, still lack the depth of understanding required for advanced history. They’re great for basic facts, but when it comes to more nuanced, PhD-level historical inquiry, they’re not yet up to the task.”

Examples of Historical Misunderstandings

The researchers provided sample historical questions to illustrate the shortcomings of LLMs. For instance, when asked whether scale armor existed in ancient Egypt during a specific timeframe, GPT-4 Turbo incorrectly said that it did, even though the technology did not appear until 1,500 years later.

In another example, GPT-4 claimed that ancient Egypt had a professional standing army during a certain period, while the correct answer is no.

Challenges in Historical Knowledge Retrieval

Why do LLMs perform poorly on specific historical inquiries despite excelling in complex topics like coding? Del Rio-Chanona suggests that LLMs often rely on prominent historical narratives, making it challenging to access more obscure historical facts.

“If you get told A and B 100 times, and C 1 time, and then get asked a question about C, you might just remember A and B and try to extrapolate from that,” she explained.

Bias in Training Data

The study also uncovered trends indicating that OpenAI and Llama models performed worse in answering questions related to regions like sub-Saharan Africa, hinting at potential biases in their training data. Peter Turchin, who led the study, emphasized that these results demonstrate that LLMs are not yet a viable substitute for human expertise in certain domains.

Future Prospects for LLMs in Historical Research

Despite the shortcomings highlighted in this study, researchers remain optimistic about the potential of LLMs to assist historians in the future. They are committed to refining the Hist-LLM benchmark by integrating more data from underrepresented regions and formulating more complex questions.

“Overall, while our results highlight areas where LLMs need improvement, they also underscore the potential for these models to aid in historical research,” the study concludes.
